HYVE VUE

Intelligence Infrastructure Platform

A production-grade AI operations platform for teams that run AI at scale. Monitor every provider request in real time, enforce budgets before costs spiral, and maintain complete auditability — all from a single self-hosted dashboard.

Built by Vibe Software Solutions and released free to the world. HYVE VUE is the infrastructure layer that should exist under every AI-powered product — visibility, control, and accountability from day one.

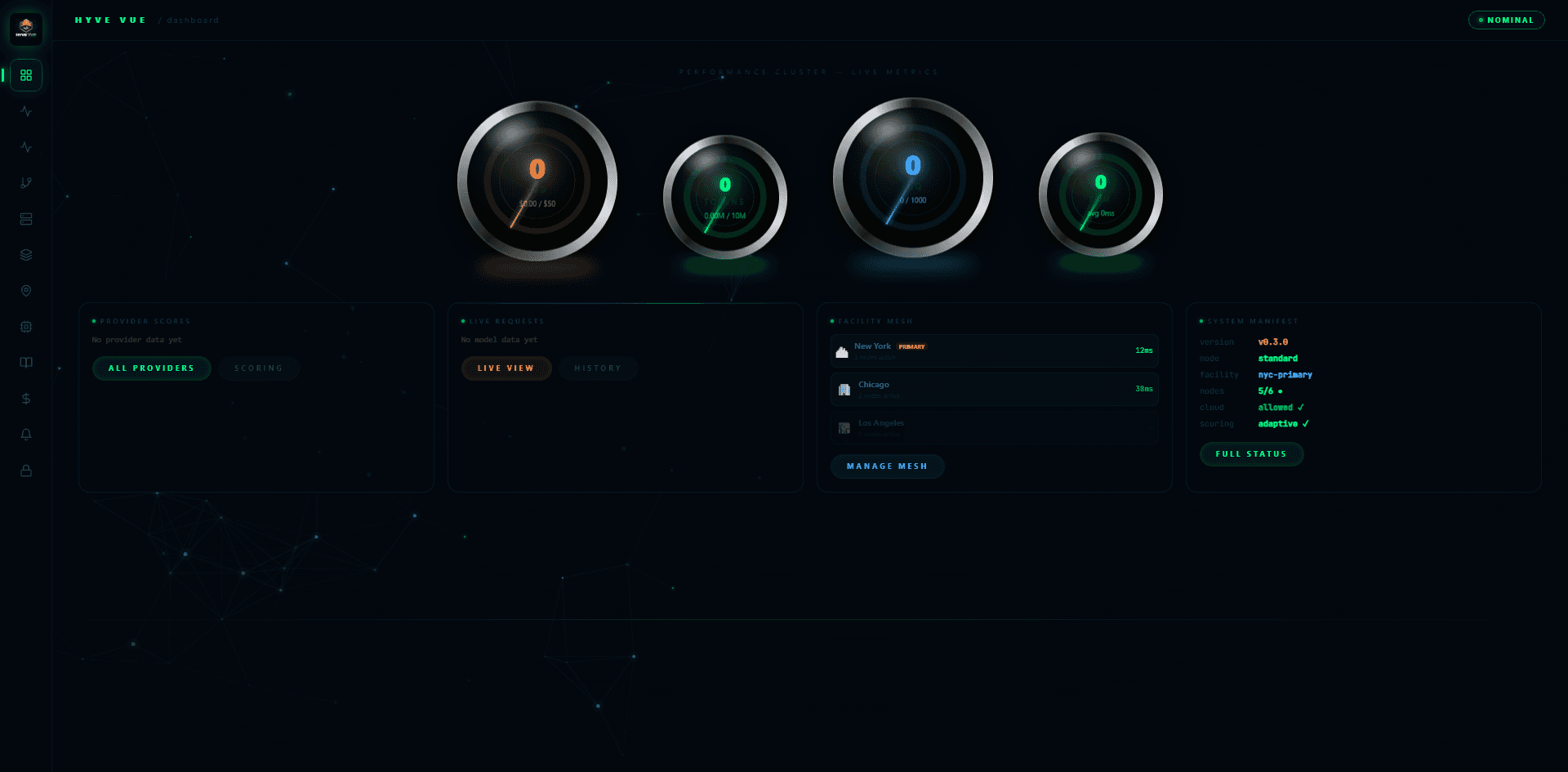

What it looks like running on your machine.

Dashboard · Performance Cluster · Facility Mesh

Login · Neural Canvas Background

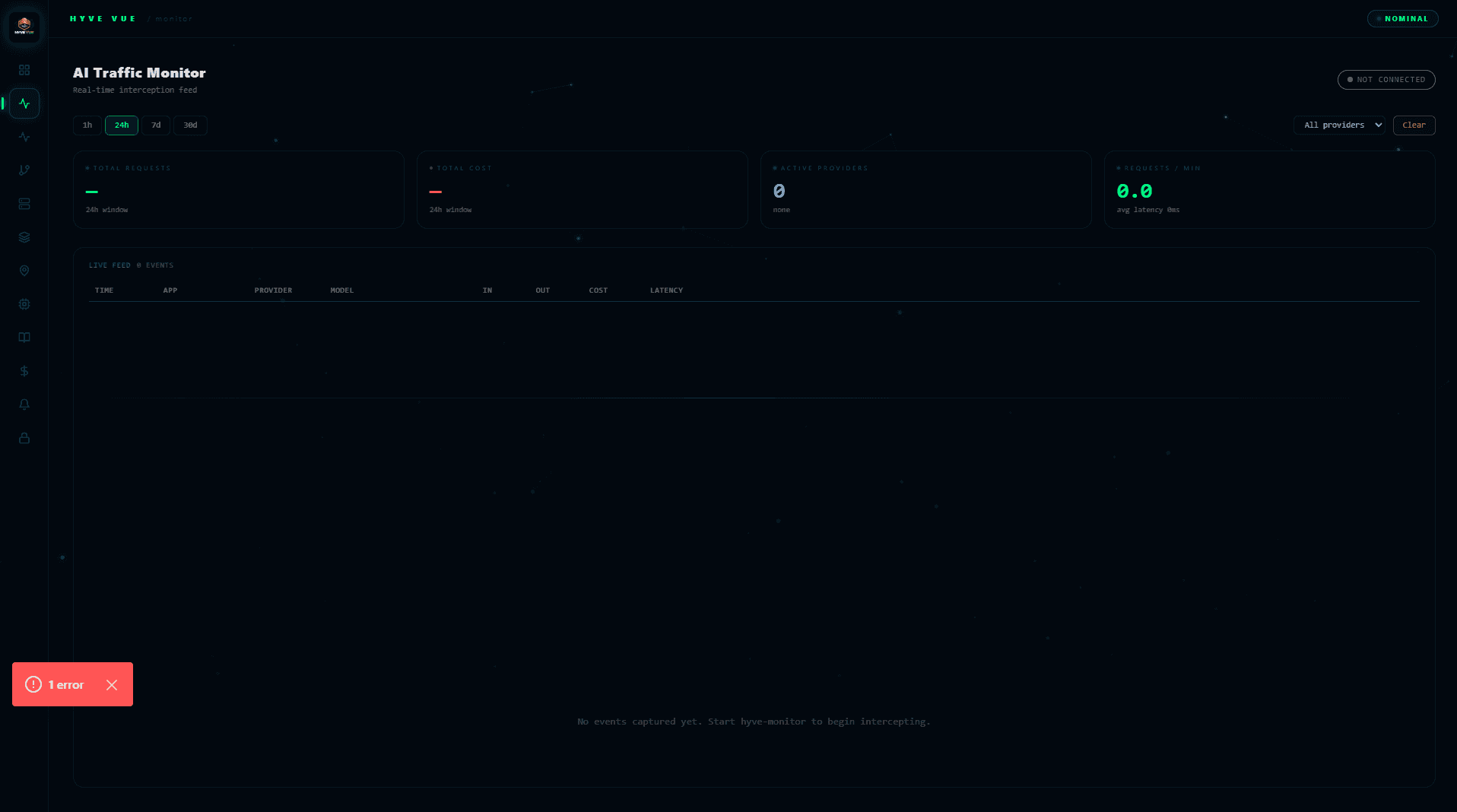

AI Traffic Monitor · Live Request Feed

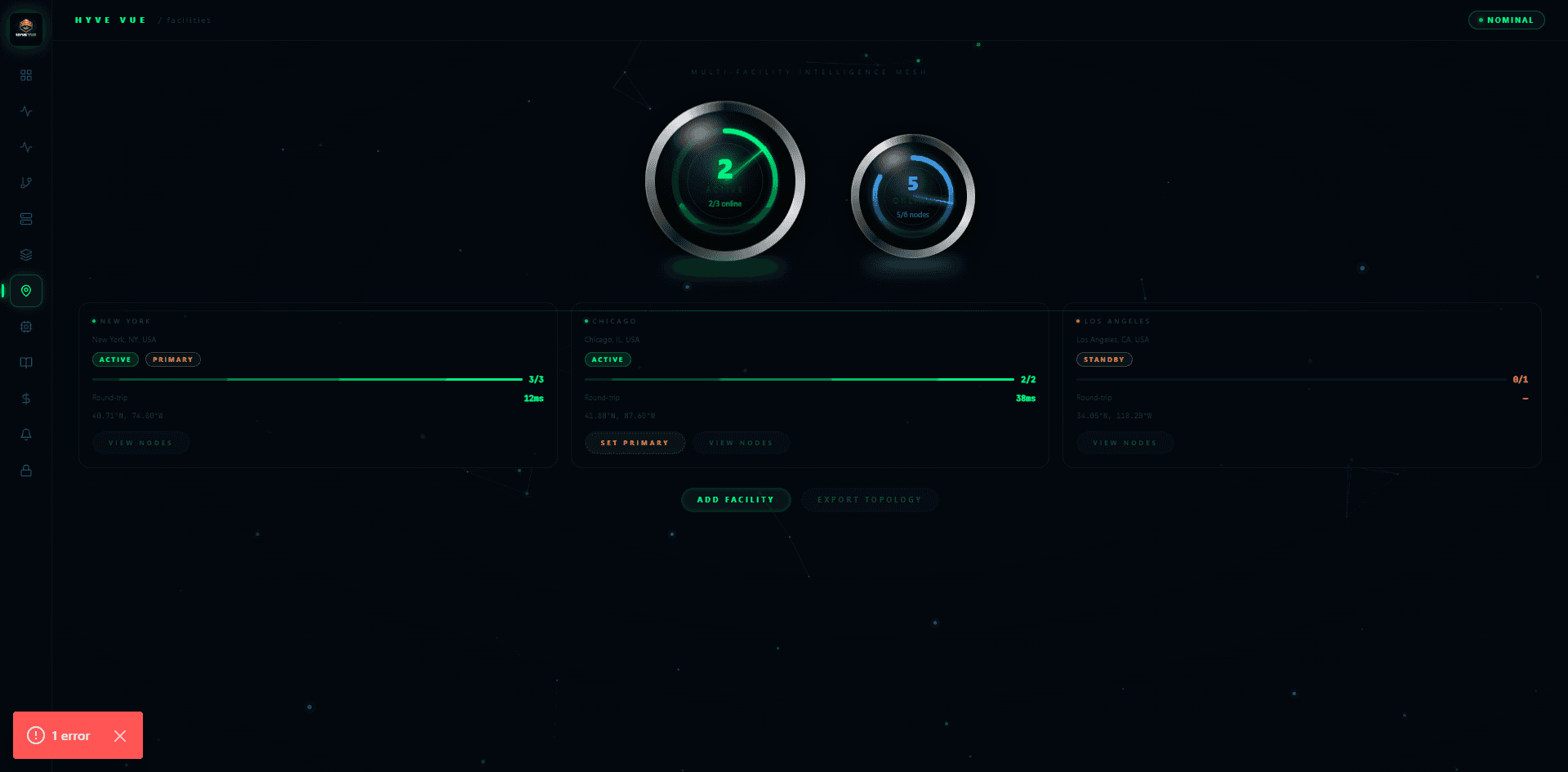

Facility Mesh · Multi-Region Node Topology

Your AI stack has no visibility layer. Until now.

Most teams running AI in production are flying blind. They know which providers they're calling, but not what each one costs by department, which models are underperforming, where budget is being burned, or which requests triggered the spike at 2 AM.

HYVE VUE fixes this. It sits between your application and your AI providers, ingesting every request event through a lightweight monitor key API. From there, it surfaces a real-time intelligence layer: live request feeds, provider-level cost analytics, department budget tracking, configurable alert thresholds, and a full immutable audit log.
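As a rough illustration of the ingestion step described above, the sketch below builds one request event and wraps it in an HTTP POST that carries a monitor key. The endpoint URL, the `X-Monitor-Key` header name, and every field name are assumptions made for this example; this page does not specify the actual API, so check your deployment before relying on any of them.

```python
import json
import urllib.request
from datetime import datetime, timezone

# Hypothetical values: the real ingest URL, header name, and field names
# are not given in this document and may differ in an actual deployment.
INGEST_URL = "http://localhost:8080/api/ingest"
MONITOR_KEY_HEADER = "X-Monitor-Key"


def build_event(provider, model, tokens, cost_usd, latency_ms, department):
    """Describe one completed AI provider request as a plain dict."""
    return {
        "provider": provider,
        "model": model,
        "tokens": tokens,
        "cost_usd": cost_usd,
        "latency_ms": latency_ms,
        "department": department,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


def build_request(event, monitor_key):
    """Wrap the event in a POST request without sending it."""
    body = json.dumps(event).encode("utf-8")
    return urllib.request.Request(
        INGEST_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            MONITOR_KEY_HEADER: monitor_key,
        },
        method="POST",
    )


event = build_event("openai", "example-model", 1250, 0.0175, 840, "support")
request = build_request(event, "mk_example_key")
# urllib.request.urlopen(request) would send it; omitted so the sketch runs offline.
```

Keeping the event a flat dictionary means the application only has to report what it already knows after each provider call: who was called, how long it took, and what it cost.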

Deploy it on your own infrastructure. No cloud dependency. No SaaS fees. No data leaving your perimeter. HYVE VUE is infrastructure you own.

Multi-Tenant Architecture

Isolate departments, teams, or entire organizations under separate tenants — each with their own budgets, providers, users, and analytics. One platform, zero cross-contamination.

Real-Time AI Monitoring

Live WebSocket feeds surface AI provider events as they happen. Track latency, token consumption, cost, and error rates across every model and endpoint in real time.

Provider Intelligence

Unified visibility across OpenAI, Anthropic, Google, and any custom provider. Compare cost-per-token, reliability, and response quality side-by-side — without switching dashboards.
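To make the side-by-side comparison concrete, here is a minimal sketch of how cost per 1,000 tokens and error rate could be derived per provider from a list of request events. The event fields and the sample numbers are illustrative assumptions, not HYVE VUE's actual schema or real pricing.

```python
from collections import defaultdict


def summarize_by_provider(events):
    """Aggregate cost per 1k tokens and error rate for each provider."""
    totals = defaultdict(
        lambda: {"requests": 0, "errors": 0, "tokens": 0, "cost_usd": 0.0}
    )
    for e in events:
        t = totals[e["provider"]]
        t["requests"] += 1
        t["errors"] += 1 if e["error"] else 0
        t["tokens"] += e["tokens"]
        t["cost_usd"] += e["cost_usd"]

    summary = {}
    for provider, t in totals.items():
        cost_per_1k = t["cost_usd"] / t["tokens"] * 1000 if t["tokens"] else 0.0
        summary[provider] = {
            "cost_per_1k_tokens": cost_per_1k,
            "error_rate": t["errors"] / t["requests"],
        }
    return summary


# Made-up sample events, for illustration only.
events = [
    {"provider": "openai", "tokens": 1000, "cost_usd": 0.02, "error": False},
    {"provider": "openai", "tokens": 1000, "cost_usd": 0.02, "error": True},
    {"provider": "anthropic", "tokens": 2000, "cost_usd": 0.03, "error": False},
]
summary = summarize_by_provider(events)
```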

Budget & Alert Engine

Set spending limits at the tenant, department, or provider level. Configurable threshold alerts fire before costs run away — not after.
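One way to picture threshold alerts is as a check that runs every time spend is updated and reports which fractions of the limit were just crossed. The sketch below assumes alert points at 50%, 80%, and 100% of a budget; the `Budget` type, its fields, and those default fractions are hypothetical and are not taken from HYVE VUE's configuration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    scope: str        # "tenant", "department", or "provider"
    name: str
    limit_usd: float
    alert_at: tuple = (0.5, 0.8, 1.0)  # assumed fractions of the limit


def crossed_thresholds(budget, spent_before, spent_after):
    """Return the alert fractions crossed when spend moves from before to after."""
    return [
        fraction
        for fraction in budget.alert_at
        if spent_before < fraction * budget.limit_usd <= spent_after
    ]


support = Budget(scope="department", name="support", limit_usd=100.0)
alerts = crossed_thresholds(support, spent_before=45.0, spent_after=85.0)
```

Comparing spend before and after each update means every threshold fires once, at the moment it is crossed, and the 80% alert arrives while there is still budget left to act on.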

Immutable Audit Logs

Every request, cost event, and configuration change is timestamped and permanent. Full replay capability for compliance reviews, cost audits, and incident investigations.
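This page does not say how immutability is enforced. As a general illustration only, a common technique for making a log tamper-evident is a hash chain, in which each entry stores the hash of the one before it. The sketch below shows that technique; it is not a description of HYVE VUE's storage, and all names in it are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, payload):
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log, payload):
    """Append a payload, linking it to the previous entry by hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {
            "prev_hash": prev_hash,
            "payload": payload,
            "hash": _entry_hash(prev_hash, payload),
        }
    )


def verify(log):
    """Return True only if every entry still matches its recorded hash chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"type": "request", "provider": "openai", "cost_usd": 0.02})
append_entry(log, {"type": "config_change", "field": "budget_limit", "value": 100})
```

Because each hash covers the previous one, editing any earlier entry invalidates every entry after it, which is what makes a later replay or audit trustworthy.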

Role-Based Access Control

Five distinct roles — super admin, tenant admin, department manager, analyst, and auditor — each scoped to exactly the data and actions they need.
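The five roles come from the description above; the permissions attached to them in the sketch below are hypothetical and only illustrate how role scoping could be expressed as a lookup table. The real permission model may be finer-grained or organized differently.

```python
from enum import Enum


class Role(Enum):
    SUPER_ADMIN = "super admin"
    TENANT_ADMIN = "tenant admin"
    DEPARTMENT_MANAGER = "department manager"
    ANALYST = "analyst"
    AUDITOR = "auditor"


# Assumed action names, chosen for illustration only.
PERMISSIONS = {
    Role.SUPER_ADMIN: {"manage_tenants", "manage_users", "edit_budgets",
                       "view_analytics", "view_audit_log"},
    Role.TENANT_ADMIN: {"manage_users", "edit_budgets",
                        "view_analytics", "view_audit_log"},
    Role.DEPARTMENT_MANAGER: {"edit_budgets", "view_analytics"},
    Role.ANALYST: {"view_analytics"},
    Role.AUDITOR: {"view_audit_log"},
}


def can(role, action):
    """Return True if the role's permission set includes the action."""
    return action in PERMISSIONS[role]


analyst_can_edit = can(Role.ANALYST, "edit_budgets")
auditor_can_read_log = can(Role.AUDITOR, "view_audit_log")
```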

Production-grade. Built to deploy anywhere.

Engineering Teams

Teams that run multiple AI providers across products and need a single control plane for cost and reliability visibility.

Enterprise Organizations

Multi-department AI usage with compliance requirements — audit logs, role-based access, and spend governance built in.

AI-Native Startups

Moving fast and spending heavily on API calls. Budget alerts and provider analytics pay for themselves in week one.

Government & Defense

Self-hosted, air-gap-compatible, no external dependencies. Full data sovereignty with zero SaaS exposure.